When Microsoft launched Azure, the team introduced a service called Azure WebSites in the early days. This service was an amazing step towards making a very complex topic as easy as possible. Even today we are looking for a comparable service in Amazon Web Services, without luck.

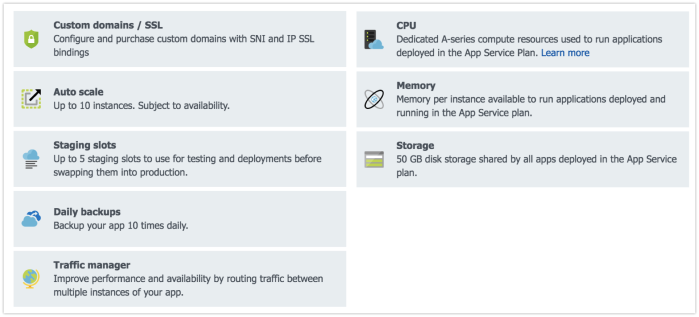

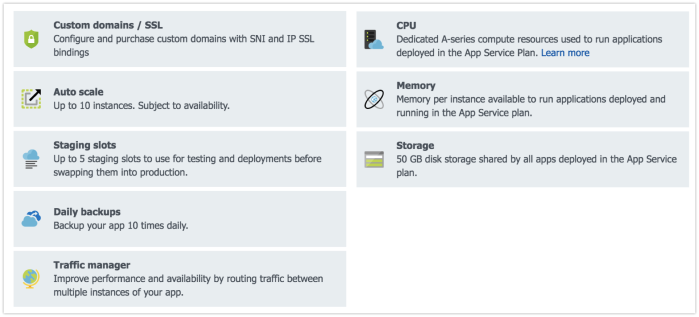

Nowadays the service is called Azure App Service and can be considered the backbone of Microsoft’s strategy to make life easier for Software as a Service developers. Azure App Service is definitely the solution for headache-free operation of web applications, because many important features are included, e.g.:

- Load Balancing

- SSL Certificate Management

- Patch Management and Operating System Updates

- Deployment Slots

Besides that, an Azure App Service is fault tolerant even if you operate just a single instance, and it allows you to scale out based on many indicators like CPU, RAM or queue length. Adding WebJobs to App Service was the missing piece for moving a web application’s workload into the background, e.g. processing uploaded images or preparing data reports.

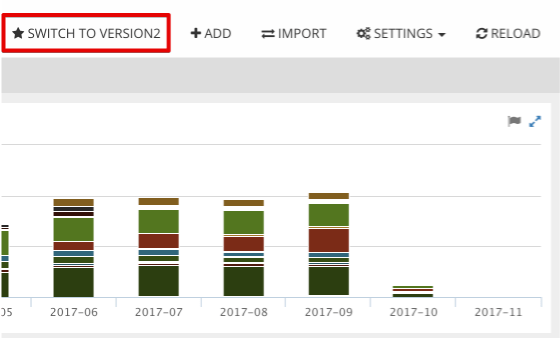

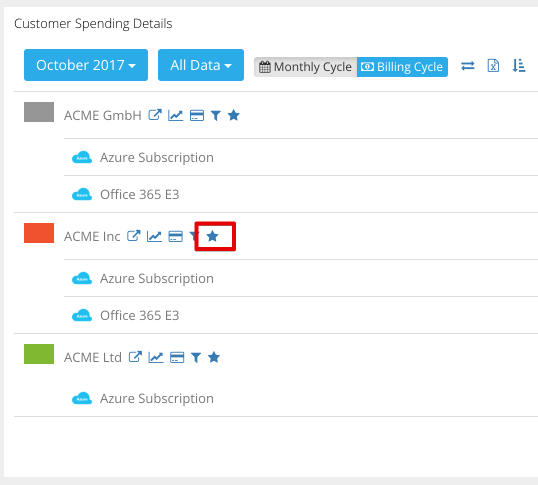

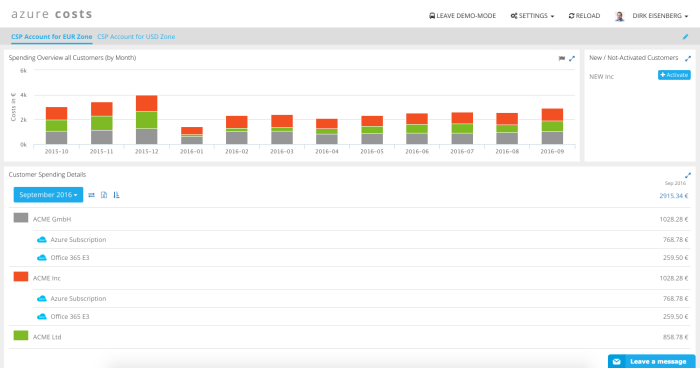

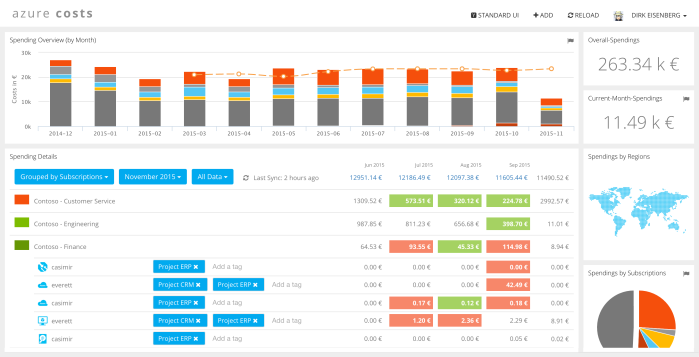

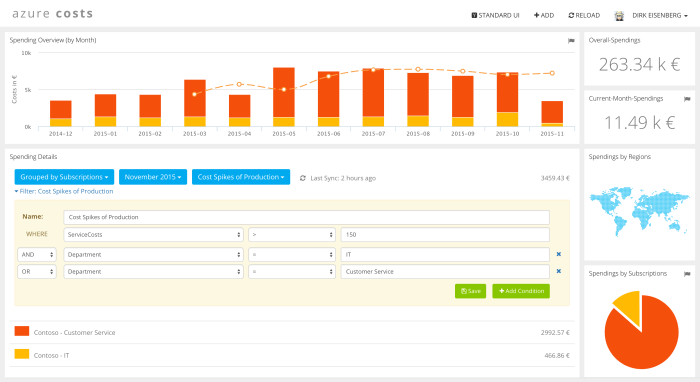

As a Software as a Service offering grows (as Azure Costs did), background work becomes something to worry about when using Azure App Service. The scale-out features look great for small apps but have their pitfalls: you can only scale out at the instance level, not at the job level, so to increase the number of workers you have to increase the instance count. The other challenge we had is that WebJobs share CPU and RAM with the website process, which can hurt the perceived performance of your application. This can be mitigated by operating several App Service plans in parallel and running the website in a different plan from the background workers.

At this point, cost comes into play. Operating an App Service plan that only runs WebJobs works well, but in reality you don’t need all the nice website features, and you usually want more granular ways to scale out. At Azure Costs we started searching for an alternative that also runs in Azure, because we wanted to keep the traffic within one data center and keep an eye on those costs as well. During this research we found that container technology could be very helpful, because it allows us to spin up another 20 containers just to scale out one specific area.

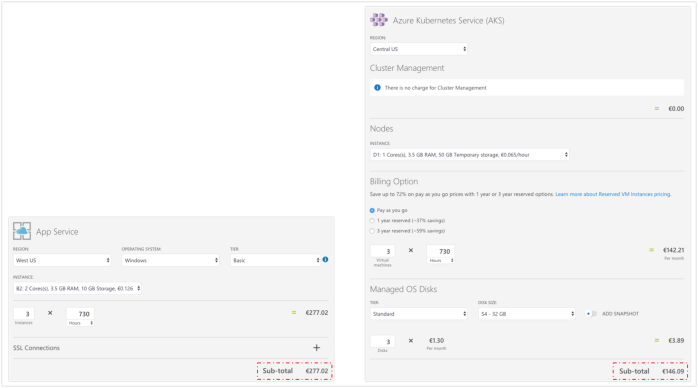

Microsoft offers AKS (Azure Kubernetes Service), a managed Kubernetes cluster running on Azure that spares you the pain of operating the control plane. This makes it attractive even for small clusters of 2 to 5 servers. Let’s review whether, and if so how, AKS can cover the new requirements.

Requirement 1: Background workers can’t directly influence the website host

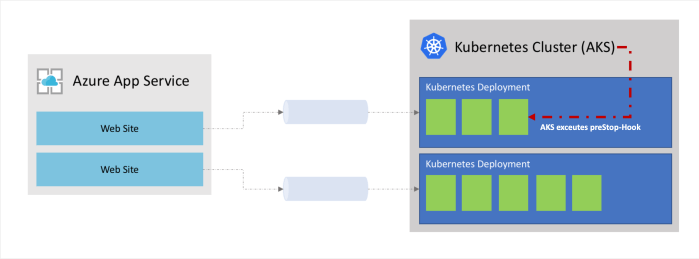

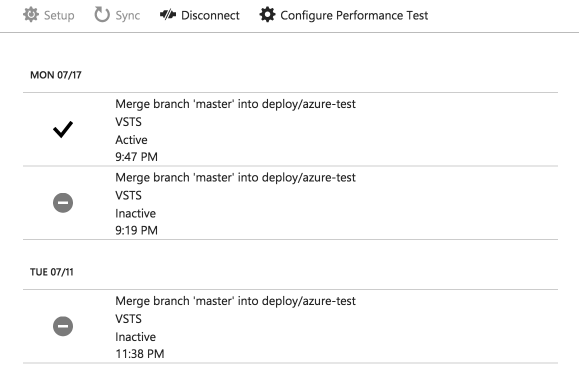

We decided to keep the website on an Azure App Service plan and use all of its features, such as SSL certificates, instance-based scale-out on CPU and RAM, and load balancing. By design, the Kubernetes cluster runs on different hosts from the website, so the two never influence each other directly.

Requirement 2: Scale-out is possible on a per-job level

Kubernetes runs containers (Docker images in our case), which means that what used to be a WebJob now becomes a container, and the number of containers can easily be scaled out as part of a deployment definition. On Azure, the underlying servers, grouped into node pools, can also be scaled out automatically, since AKS supports the Kubernetes cluster autoscaler. The system becomes fully flexible and can breathe as you need it to.
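To make per-job scaling concrete, here is a minimal sketch of a Kubernetes `apps/v1` Deployment for a single worker type, built as a Python dictionary and serialized to JSON (`kubectl apply -f` accepts JSON as well as YAML). The worker name `report-worker`, the image `myregistry.azurecr.io/report-worker:1.0` and the replica count are hypothetical placeholders, not taken from our actual setup.

```python
import json


def worker_deployment(name: str, image: str, replicas: int) -> dict:
    """Build a minimal apps/v1 Deployment manifest for one background worker type.

    The name and image passed in are hypothetical placeholders for illustration.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            # Number of identical worker pods; scaling this one job means changing only this value.
            "replicas": replicas,
            # The selector must match the labels of the pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image},
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = worker_deployment(
        name="report-worker",  # hypothetical worker name
        image="myregistry.azurecr.io/report-worker:1.0",  # hypothetical image
        replicas=20,
    )
    # Save the output to a file and apply it with `kubectl apply -f <file>`.
    print(json.dumps(manifest, indent=2))
```

Because every worker type gets its own Deployment, `kubectl scale deployment report-worker --replicas=30` changes the count of this one worker without touching any other job, which is exactly what App Service instance scaling could not do.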

Requirement 3: Dedicated resource allocation for job workers is possible

In Azure App Service, resources are fully shared, which means the jobs compete with the web server for RAM and CPU. It isn’t even possible to guarantee a minimum CPU share to a dedicated worker, so a burst of background work can easily overload the CPU. Kubernetes takes a different approach: each container declares resource requests and limits, and from these Kubernetes assigns the pod a strict Quality of Service (QoS) class, which makes resource allocation manageable.
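The QoS rules can be sketched in a few lines. This is a simplified re-implementation of the classification described in the Kubernetes documentation, not Kubernetes’ own code: only regular containers are considered (no init containers), and quantities are compared as plain strings, so `"0.5"` and `"500m"` would wrongly count as different. The example resource values are hypothetical.

```python
from typing import Dict, List

# One container's resources in the shape of a Kubernetes container spec, e.g.
# {"requests": {"cpu": "500m", "memory": "512Mi"}, "limits": {...}}.
Resources = Dict[str, Dict[str, str]]


def effective_requests(res: Resources) -> Dict[str, str]:
    """Kubernetes defaults a missing request to the limit when only a limit is set."""
    requests = dict(res.get("requests", {}))
    for resource, limit in res.get("limits", {}).items():
        requests.setdefault(resource, limit)
    return requests


def qos_class(containers: List[Resources]) -> str:
    """Derive a pod's QoS class from its containers' CPU and memory requests/limits."""
    guaranteed = True
    any_set = False
    for res in containers:
        limits = res.get("limits", {})
        requests = effective_requests(res)
        for resource in ("cpu", "memory"):
            if resource in limits or resource in requests:
                any_set = True
            # Guaranteed needs a CPU and a memory limit on every container, equal to the request.
            if resource not in limits or requests.get(resource) != limits[resource]:
                guaranteed = False
    if guaranteed and any_set:
        return "Guaranteed"
    if any_set:
        return "Burstable"
    return "BestEffort"


# Hypothetical worker with requests equal to limits.
worker = {"requests": {"cpu": "500m", "memory": "512Mi"},
          "limits": {"cpu": "500m", "memory": "512Mi"}}
print(qos_class([worker]))                       # Guaranteed
print(qos_class([{"requests": {"cpu": "250m"}}]))  # Burstable
print(qos_class([{}]))                           # BestEffort
```

The request is what the scheduler reserves for a pod on a node, so a worker with requests set gets the guaranteed minimum share that App Service cannot offer, while the limit caps how much it can take from its neighbours.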

Requirement 4: Patch management comes for free

Patch management is the weak spot of AKS, because you are operating virtual machines in the cloud and it’s up to you to trigger updates and so on. We decided to follow the idea of immutable infrastructure: when we need an update, we simply deploy a new cluster and remove the old one. This gives us all the work Microsoft invests in its virtual machine images for free.

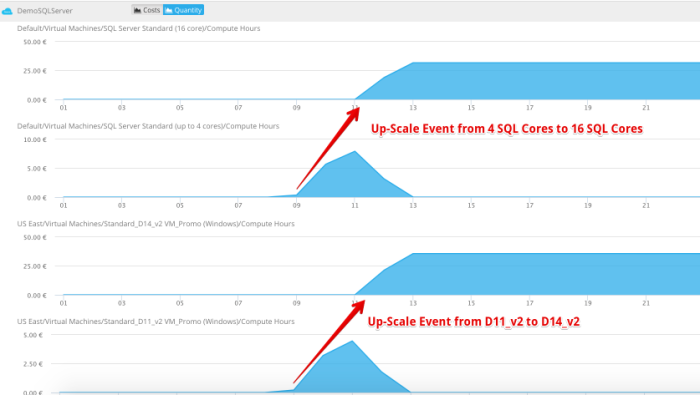

In the end, we settled on moving all background work into a growing Kubernetes cluster and containerizing our entire background logic with Docker. Since making this change, we have full control over resource allocation and can easily scale out per job: when more people request on-demand reports, which should not block the IIS threads, the system scales up that group of workers. Overnight, when we import tons of data, the system scales up the import workers just as easily.
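Scaling a worker group up and down like this is typically handled by the Kubernetes Horizontal Pod Autoscaler. The sketch below reproduces its core rule from the Kubernetes documentation, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with the default tolerance of 0.1 and clamping to min/max replicas. It is a simplification (no stabilization window, no handling of unready pods), and the metric (queued messages per worker) and all numbers are illustrative assumptions; scaling on a queue length also requires an external metrics source, for example an adapter such as KEDA.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Core Horizontal Pod Autoscaler rule, simplified for illustration."""
    ratio = current_metric / target_metric
    # Within the tolerance band the HPA leaves the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the bounds configured on the autoscaler.
    return max(min_replicas, min(max_replicas, desired))


# Hypothetical nightly import: 4 workers, 300 queued messages per worker on average,
# target of 100 messages per worker.
print(desired_replicas(4, current_metric=300, target_metric=100, max_replicas=20))  # 12
# Quiet period: 5 messages per worker against the same target scales back to the minimum.
print(desired_replicas(12, current_metric=5, target_metric=100, min_replicas=2))  # 2
```

If the extra pods no longer fit on the existing nodes, the cluster autoscaler adds nodes to the node pool, so both layers breathe together.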

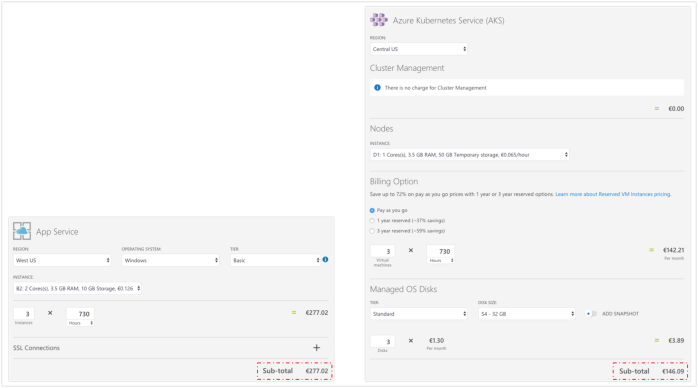

The cost review is positive as well. Since Microsoft does not charge for cluster management, you only pay for the virtual machines. Typically this means machines on premium storage for approx. 90% of the price of the corresponding App Service plan, and these machines usually offer twice the RAM of that plan.
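Taking these approximate figures at face value (about 90% of the price, twice the RAM), the RAM you get per unit of cost works out as follows, where $P$ and $R$ denote the price and RAM of the App Service plan:

$$
\frac{\text{RAM per cost (VM)}}{\text{RAM per cost (App Service plan)}} = \frac{2R / (0.9P)}{R / P} = \frac{2}{0.9} \approx 2.2
$$

In other words, roughly 2.2 times as much memory for the same spend, under the assumption that the VM and plan sizes are compared like for like.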

I can recommend combining Azure App Service plans for your websites and web services with a managed Kubernetes cluster, known as AKS, for all the work behind the scenes. What do you think about this architecture? Did I miss something? Do you follow a different approach?